Working with AI Agents



I remember talking with my ex-manager Jatin about Vibe Coding and how cliched this term seemed back in March 2025, but our industry moves fast.

I have been pushing Claude Code, my tool of choice, to the edge. I have:

- burnt my hand with AI agents by pushing bad code to production

- tried multiple AI agents to finally land on my current choice

- had immense success in moving fast by leveraging these tools

- learnt from others how they are using these tools

- devised a flow that works pretty well for me

Let’s dive into how I settled on my choice of agent, how I use TDD and planning to keep the agent on a precise path to completing a job, how sprinkling in keywords improves the “thinking”, and how I configure tools and the much-hyped MCP servers. Finally, I share a few of my frequently used prompts.

Why Claude Code?

Let’s roll back a little. The first AI coding assistant that I used was GitHub Copilot. It was ahead of its time but has fallen behind now. Back then, Copilot provided very nice autocomplete support, and its chat was useful enough to act as a pair programmer.

Even though the completions were good for common patterns found in codebases, I stopped using AI autocompletion extensions in my IDE altogether. The primary reasons were:

- It kept me from getting into a flow state, because I was continuously waiting for the AI to do its job, which it sometimes did and sometimes didn’t.

- I was relying more and more on autocomplete and AI correction, which kept me from learning the correct way to program things and from developing muscle memory for patterns, methods, etc., because if “I don’t write it, I won’t ever learn it”.

Others have voiced the same ideas, like Luciano in his blog: Why I stopped using AI code editors, and DHH on the Lex Fridman Podcast: Future of Programming. So no more autocomplete in the IDE.

The way I use AI is in a separate window…because I can literally feel competence draining out of my fingers, that level of immediacy with the material disappears. - DHH

For some time I tried using the chat interface of GitHub Copilot for correcting code and asking for suggestions whenever required. I also tried Cascade by Windsurf in IntelliJ, but that didn’t work out well because the agentic flow in these tools wasn’t working great at the time.

I never went ahead with Cursor because, given that it’s a VSCode fork, Java, my primary language at work, isn’t very well supported in it.

So my reluctance to learn a new IDE, because of familiarity with shortcuts and controls, coupled with not using autocompletion, is what led me to Claude Code, which had the basic features I needed:

- Auto Edit Mode

- Ability to run test and build commands for verification

Now, let’s see how I arrived at my current workflow and what I learned along the way that helped me improve it incrementally.

Test Driven Development

TDD was introduced to me by my mentor Vicent when I did my LFX project with Vitess. In case you are not familiar with TDD, it looks as follows; otherwise, skip ahead:

- Based on the requirements, write the test cases for the feature/change that you are planning to make.

- Add skeleton classes and functions with basic declarations, which just make the tests build but don’t implement the feature yet.

- Now run the tests and ensure that they are failing. This is what we define as the Red Phase.

- Go ahead with the implementation, at the end of which all tests should be passing. This is the Green Phase.
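As a rough illustration of the red and green phases above, here is a minimal Python sketch. The `apply_discount` function and its requirement are made up for the example; in a real codebase the implementation would replace the skeleton in place rather than live next to it.

```python
import unittest
from typing import Callable


def apply_discount_skeleton(price: float, percent: float) -> float:
    """Skeleton: lets the tests build, but implements nothing yet."""
    raise NotImplementedError("implementation comes after the tests")


def apply_discount(price: float, percent: float) -> float:
    """Real implementation, written only after the tests were seen failing."""
    return price * (1 - percent / 100)


def run_tests(func: Callable[[float, float], float]) -> unittest.TestResult:
    """Run the requirement-derived tests against the given function."""

    class ApplyDiscountTest(unittest.TestCase):
        def test_ten_percent_off(self):
            self.assertAlmostEqual(func(200.0, 10.0), 180.0)

        def test_zero_percent_keeps_price(self):
            self.assertAlmostEqual(func(50.0, 0.0), 50.0)

    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    result = unittest.TestResult()
    suite.run(result)
    return result


# Red phase: the skeleton raises, so the tests must not pass.
red = run_tests(apply_discount_skeleton)
print("red phase passes:", red.wasSuccessful())

# Green phase: the same tests now run against the implementation.
green = run_tests(apply_discount)
print("green phase passes:", green.wasSuccessful())
```

The point of seeing the red phase first is that it proves the tests can actually fail, so a later green run means something.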

Now, TDD is what we should always be using in an ideal software engineering world, but we don’t. So that is exactly what I asked Claude Code to do: follow TDD.

TDD requires us to provide the agent with well-crafted requirements in our prompt, which in turn requires us to think ahead. We would have done that even if there was no LLM, so why should this be any different?

Story of a Passing Test

The story behind choosing TDD is that I had accidentally pushed bad code to production, and it stayed there for quite some time. I had written the code myself, but the reluctance of SWEs to write tests got the better of me and I offloaded the tests to Claude.

It did very well in terms of test cases, I was happy, and the code got merged. Only later did I realize that my code was wrong and Claude had force-fitted the assertions to ensure the tests passed, which is what I had asked it to verify.

That’s why I find that reversing the order prevents force-fitting test cases to code that is already written, and it also gives both us and Claude a chance to think through the requirements first.
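To make the force-fitting problem concrete, here is a small hypothetical Python sketch. The `shipping_fee` function, its requirement and its bug are all invented for illustration; they are not the code from my incident.

```python
def shipping_fee(order_total: float) -> float:
    """Hypothetical requirement: orders of 100 or more ship free, others pay 5."""
    # Bug: the comparison is strict, so an order of exactly 100 is charged.
    return 0.0 if order_total > 100 else 5.0


def force_fitted_test() -> bool:
    # Written after the code by observing its output: it encodes the bug.
    return shipping_fee(100) == 5.0


def requirement_first_test() -> bool:
    # Written from the requirement before the code: it exposes the bug.
    return shipping_fee(100) == 0.0


print("force-fitted test passes:", force_fitted_test())
print("requirement-first test passes:", requirement_first_test())
```

A test derived from the code's behaviour can only confirm what the code already does, while a test derived from the requirement can disagree with it.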

Plan Mode

This was something I became aware of while reading the Anthropic team’s document on best practices for Claude Code.

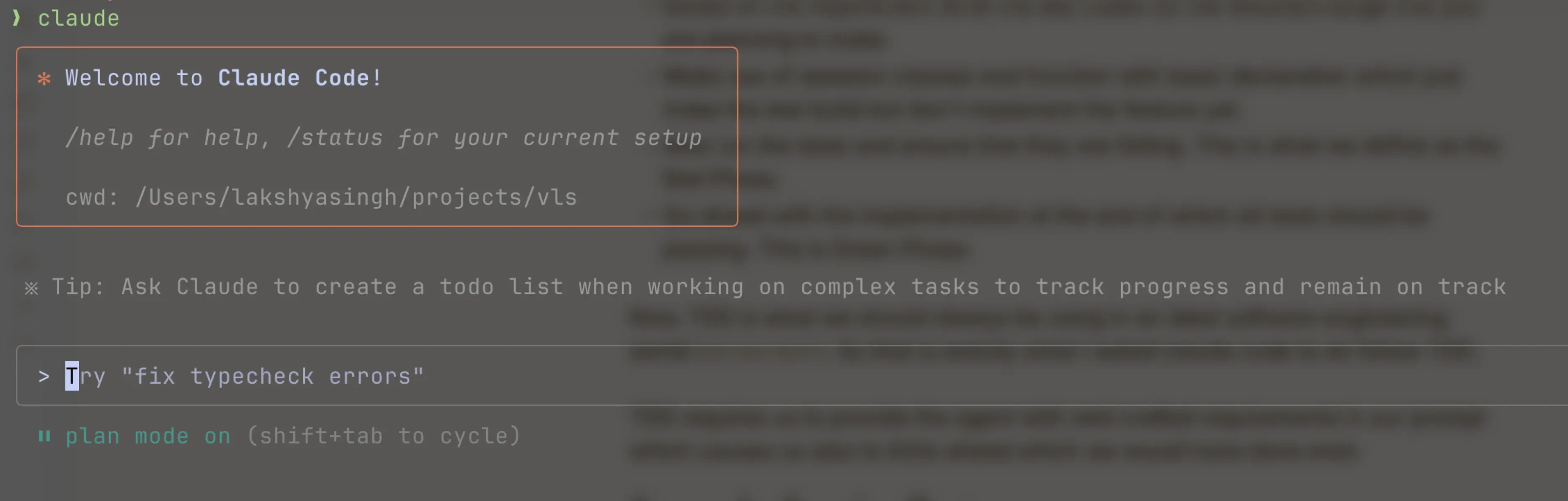

So before starting any task I make sure to put Claude into plan mode (Shift + <TAB>). Based on the requirements provided, it generates a plan, which we can reject and ask it to iterate on until we are satisfied.

Using Keywords

One important thing that the best practices document mentions is the use of words like:

- think

- think hard

- think harder

- ultrathink

These trigger extended thinking mode for Claude and are mapped to increasing levels of thinking budget. So, based on your pocket and the complexity of the task, you can put one of these in your prompt when asking it to generate a plan.

Reviewing the Plan

Getting Claude to review my plan was something I picked up from Twitter. It is something I recently included in my flow, even though it takes a few more seconds.

So after claude has completed the plan creation and you are mostly happy with it:

- Approve the plan and ask it to move into edit phase

- Halt the edit phase using the `Esc` key

- Ask it to write the complete plan in a markdown file

- Now using the `/clear` command, clear the context

- Go back to plan mode, prompt claude to review the plan generated, identify any issues or misses and correct them

- Now once again `/clear` the context and in auto edit mode ask claude to implement the generated plan.

The above process helps in two ways. First, it keeps the context window small: after reading the already generated plan, Claude won’t go into exploration mode, which keeps its implementation limited to specific regions of code.

Second, the reviewing Claude helps guard against hallucination, given that it’s an extra layer of filtering with fresh context.

Sub Agents

I had experienced this earlier, when Claude Code was running independent sub agents to complete the plan faster, but after a few updates it stopped doing so. I also forgot about this feature.

Recently one of my colleagues, Raymond, pointed out that sub agents can help with context reduction, as their context is not shared with the main agent. While that is a great add-on, the biggest benefit is that explicitly asking Claude to use sub agents for running independent tasks concurrently reduces the time to completion a lot.
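As an analogy only, the time saving from running independent tasks concurrently can be sketched in Python with `asyncio`. This is not how Claude Code schedules sub agents internally; the task names and durations are made up, and the sleeps merely stand in for work.

```python
import asyncio
import time


async def run_subtask(name: str, seconds: float) -> str:
    """Stand-in for one independent task; the sleep simulates the work."""
    await asyncio.sleep(seconds)
    return f"{name} done"


async def run_concurrently(tasks: dict[str, float]) -> list[str]:
    # gather starts every task at once and returns results in input order.
    return await asyncio.gather(*(run_subtask(n, s) for n, s in tasks.items()))


# Hypothetical independent tasks with simulated durations in seconds.
tasks = {"update tests": 0.2, "refactor module": 0.3, "fix docs": 0.1}

start = time.perf_counter()
results = asyncio.run(run_concurrently(tasks))
elapsed = time.perf_counter() - start

print(results)
print(f"elapsed {elapsed:.2f}s, versus {sum(tasks.values()):.2f}s one after another")
```

When the tasks really are independent, the wall-clock time is bounded by the slowest task rather than by the sum of all of them.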

Configuring Tools

Claude Code configuration can store details about which tools we allow it to use automatically without asking for confirmation and which are disallowed. Here is what it looks like:

```json
{
  "permissions": {
    "allow": [
      "Bash(bazel build:*)",
      "Bash(gh pr view:*)",
      "Bash(gh pr diff:*)",
      "Bash(ls:*)",
      "Bash(rg:*)",
      "Bash(grep:*)",
      "Bash(find:*)",
      "Bash(fd:*)"
    ],
    "deny": [
      "Bash(rm:*)"
    ]
  },
  "enableAllProjectMcpServers": false
}
```

Having an allowed set of tools allows you to spend less time monitoring the approval requests claude code will send.
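A simplified mental model of how such rules could be evaluated is sketched below in Python. It is not Claude Code's actual matcher: it assumes that `:*` means "this command prefix followed by anything", that deny rules win over allow rules, and that anything unmatched falls back to asking. It only uses a subset of the rules above.

```python
import json

# A subset of the configuration shown above.
SETTINGS = json.loads("""
{
  "permissions": {
    "allow": ["Bash(bazel build:*)", "Bash(gh pr view:*)", "Bash(ls:*)"],
    "deny": ["Bash(rm:*)"]
  }
}
""")


def command_prefix(rule: str) -> str:
    """Extract 'bazel build' from a rule shaped like 'Bash(bazel build:*)'."""
    inner = rule[len("Bash("):-1]  # drop the 'Bash(' wrapper and the final ')'
    return inner.removesuffix(":*")


def matches(rule: str, command: str) -> bool:
    """Assumed semantics: the command is the prefix itself or starts with it."""
    prefix = command_prefix(rule)
    return command == prefix or command.startswith(prefix + " ")


def decide(command: str) -> str:
    """Return 'deny', 'allow' or 'ask' under the assumptions stated above."""
    perms = SETTINGS["permissions"]
    if any(matches(rule, command) for rule in perms["deny"]):
        return "deny"
    if any(matches(rule, command) for rule in perms["allow"]):
        return "allow"
    return "ask"


for command in ["bazel build //app:server", "rm -rf out", "git push"]:
    print(command, "->", decide(command))
```

Checking deny first is the conservative choice here: a destructive command stays blocked even if a broad allow rule would also match it.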

The second thing that I found useful was configuring a few MCP servers. They are:

- context7 provides latest documentation for libraries being used in the code.

- glean is useful for searching and fetching internal documents e.g. gdrive, confluence, jira, etc.

- playwright is particularly useful for frontend development as it allows the agent to access the browser view, console, etc.

Improving Context

The presence of a CLAUDE.md really helps Claude develop context on which parts of the code to work on, which tools to use, etc.

We have defined a lot of these specifications, given that at Glean we use a monorepo, but keeping only the ones important for my task, so that the context stays precise, is generally better.

Predefined Prompts

I have some snippets defined in Raycast that I can quickly drop in. For something like plan creation with TDD, I use the prompt below:

```text
Write the comprehensive plan in plan-<SHORT_NAME>.md file first
- what is the issue,
- the goal,
- the idea,
- the implementation touching upon what all files to modify
- and how to write test

You have to be as particular as possible in the plan.

Proceed in a test driven manner.
- write tests first considering all the business requirements,
- next ensure that build is passing using skeleton structs and functions
- now ensure that tests are failing as we haven't completed the implementation yet.
- finally write the implementation and make sure tests are passing now

Make sure to also include ways that will finally verify that our plan was executed properly and everything is in a good state
```

For reviewing the plan I use the below one:

```text
Review @ and update any misses if its not complete or incorrect.

DON'T GO OVERBOARD WITH IDENTIFYING UNNECESSARY ISSUES.

Only focus on whether the implementation is complete and resolves the issue at hand or not. We don't want to be bothered with things like regression or performance testing without explicit plan requirements.
```

Wrapping Up

I expect my workflow to change over time as the tools evolve in response to how we use them, and I expect workflows to demand less of our engagement over time as LLMs gain more and more context about the way we work.

But for now it’s very important to treat them as those little interns who will run around doing random things if you don’t provide the precise requirements and context to complete the task.

That’s all for now. Please do reach out to share your ideas on how you have had success in working with AI agents; I am always looking for ways to be more efficient with these tools. I will see you on the other side of the AI revolution.